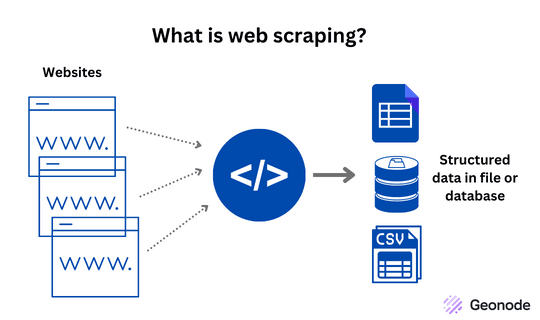

Large-scale data collection on the web requires more than just a crawler or scraping script. Modern websites actively detect automated traffic and block repeated requests from the same IP address. To operate reliably, scraping systems must use a well-designed proxy infrastructure for web scraping that distributes requests across multiple IP addresses.

A complete proxy infrastructure typically includes HTTP proxies, SOCKS5 proxies, and proxy pool management systems. These components work together to hide the origin of requests, rotate IP addresses, and prevent scraping systems from being detected or rate-limited.

In this guide, we explain how proxy infrastructure works and how it supports scalable crawling systems. If you want to understand how proxies integrate into a full crawling architecture, you can also read our pillar guide on web scraping infrastructure in the article Web Scraping API.

Why Proxy Infrastructure for Web Scraping Matters

When a crawler sends too many requests from a single IP address, websites quickly detect the pattern and apply defenses such as:

- IP blocking

- rate limiting

- CAPTCHA challenges

- traffic fingerprinting

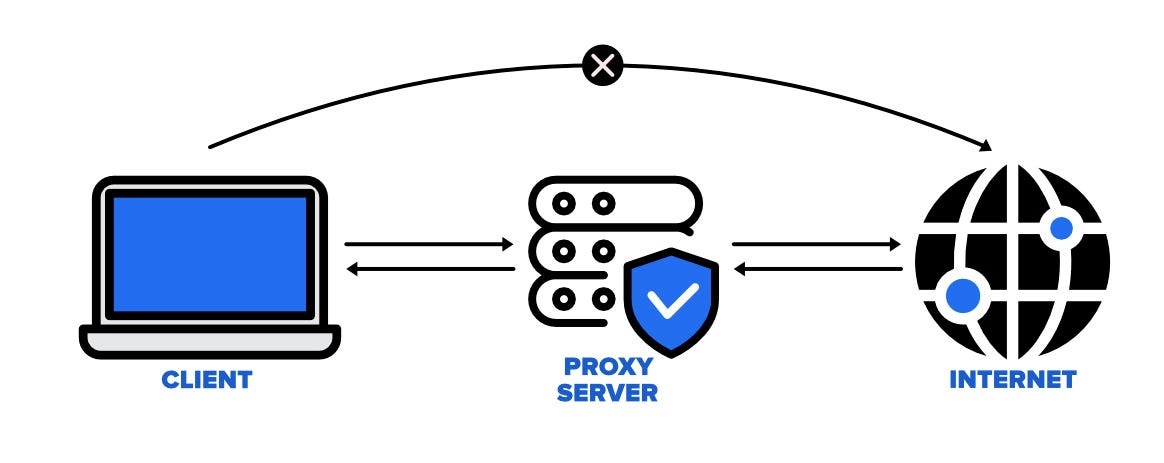

A proxy infrastructure for web scraping solves this by routing requests through many intermediate servers. Instead of a crawler appearing to originate from one machine, requests appear to come from multiple locations and networks.

This approach improves:

- crawler success rate

- scraping speed

- IP anonymity

- infrastructure scalability

Proxy systems are therefore a core component of any large-scale crawling platform. They are often integrated with distributed crawlers and scheduling systems as described in the Web Crawler Technology Guide.

HTTP Proxies in Proxy Infrastructure for Web Scraping

HTTP proxies are the most commonly used proxy type in scraping systems. They operate at the HTTP protocol layer and forward web requests from a client to a target server.The HTTP protocol used by proxies is formally defined in the IETF HTTP specification.

Basic workflow:

- Scraper sends request to proxy

- Proxy forwards request to target site

- Target site returns response to proxy

- Proxy returns response to scraper

Because the target website only sees the proxy’s IP address, the scraper’s real IP remains hidden.

HTTP proxies are particularly useful for:

- website scraping

- API crawling

- large-scale data extraction

- rotating residential proxies

They are easy to configure in most scraping frameworks and HTTP clients.

If you want a deeper explanation of how HTTP proxies work internally, see the detailed guide:

SOCKS5 Proxies in Proxy Infrastructure for Web Scraping

While HTTP proxies work at the protocol level, SOCKS5 proxies operate at a lower network layer, forwarding raw TCP connections. This makes SOCKS5 much more flexible.

Advantages of SOCKS5 proxies:

- supports multiple protocols

- handles TCP and UDP traffic

- provides stronger anonymity

- better compatibility with complex scraping tools

SOCKS5 proxies are often used when crawlers require:

- non-HTTP protocols

- advanced networking control

- bypassing strict firewall filtering

- high anonymity requirements

Because SOCKS5 does not modify request headers like HTTP proxies sometimes do, it is also harder for websites to detect proxy usage.

A full technical explanation can be found in:

Proxy Pools for Proxy Infrastructure for Web Scraping

When scraping at scale, managing individual proxies manually becomes impossible. Instead, production systems rely on proxy pools.

A proxy pool is a system that:

- maintains thousands of proxy IPs

- automatically rotates them

- checks proxy health

- removes blocked IPs

- balances traffic load

Typical proxy pool workflow:

- crawler requests proxy from pool

- pool returns available IP

- crawler sends request through proxy

- pool tracks success/failure

- blocked proxies are removed or replaced

This automated rotation significantly reduces the risk of detection.

Proxy pools are especially important when dealing with websites that aggressively block scraping traffic. Techniques for avoiding bans and handling blocked IPs are explained in detail here:

Resolve IP Blocking in Web Scraping

Best Practices for Proxy Infrastructure for Web Scraping

Building a reliable proxy infrastructure for web scraping requires more than just buying proxy servers. Effective systems include multiple layers of monitoring and automation.

Key best practices include:

Use large proxy pools

A larger IP pool reduces the likelihood of repeated requests from the same address.

Monitor proxy health

Automated checks should detect:

- latency

- connection failures

- blocked IPs

Combine proxy types

Using both HTTP proxies and SOCKS5 proxies increases flexibility and compatibility.

Rotate proxies intelligently

Rotation strategies can include:

- request-based rotation

- time-based rotation

- session-based rotation

Integrate with crawler infrastructure

Proxies should work alongside distributed crawlers, schedulers, and data pipelines.

A broader explanation of production scraping architectures is covered in our pillar articles:

Conclusion

A reliable proxy infrastructure for web scraping is essential for any serious data collection system. HTTP proxies, SOCKS5 proxies, and proxy pools each play a specific role in maintaining anonymity, distributing requests, and preventing IP blocking.

By combining these technologies with distributed crawlers and scraping APIs, organizations can build scalable systems capable of collecting large volumes of web data.

To learn more about building full scraping platforms, explore our guides on Web Scraping API and Web Crawler Technology.